The SqsPump component provides a highly efficient way to send messages to AWS SQS queues in Spring Boot applications through intelligent batching and optimized throughput. This article explores how to integrate and leverage this powerful messaging utility in your Spring Boot applications.

Our open source library, sqs-utilities, is available on GitHub here: sqs-utilities

Adding SQS Utilities to Your Maven Dependencies

To include the library in your Maven project, add the sqs-utilities dependency to your pom.xml file as follows:

Maven Dependency Configuration

Add the following dependency to the <dependencies> section of your pom.xml:

```xml
<dependencies>
    <!-- SQS Utilities for AWS SQS messaging -->
    <dependency>
        <groupId>com.limemojito.oss.standards.aws</groupId>
        <artifactId>sqs-utilities</artifactId>
        <version>15.3.3</version>
    </dependency>
</dependencies>
```

Getting Started with SqsPumpConfig

To enable SqsPump in your Spring Boot application, simply import the configuration class:

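```java
import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.context.annotation.Import;
// SqsPumpConfig comes from the sqs-utilities library.

@SpringBootApplication
@Import(SqsPumpConfig.class)
public class MyApplication {

    public static void main(String[] args) {
        SpringApplication.run(MyApplication.class, args);
    }
}
```

The @Import(SqsPumpConfig.class) annotation brings in the following components: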

- SqsPump: the main batching component

- SqsSender: the underlying AWS SQS client wrapper

- ObjectMapper: JSON serialization with Spring Boot-like configuration

- SqsClient: the AWS SDK v2 client (must be provided as a bean)

Basic Usage in Your Components

Once configured, inject SqsPump into any Spring component:

```java
import org.springframework.stereotype.Service;
// SqsPump comes from the sqs-utilities library; Order is your own domain type.

@Service
public class OrderProcessingService {

    private static final String ORDER_QUEUE_URL = "https://sqs.region.amazonaws.com/account/order-queue";

    private final SqsPump sqsPump;

    public OrderProcessingService(SqsPump sqsPump) {
        this.sqsPump = sqsPump;
    }

    public void processOrder(Order order) {
        // Add the order to the pending batch for this queue
        sqsPump.send(ORDER_QUEUE_URL, order);
        // The batch is sent automatically when full, or via an explicit flush
    }

    public void forceFlushPendingOrders() {
        sqsPump.flush(ORDER_QUEUE_URL);
    }
}
```

Understanding Batch Efficiency

The core efficiency of SqsPump comes from its intelligent batching mechanism, powered by the SqsSender.sendBatch() method. Here's how it optimizes throughput:

Note that you should call flush(destination) on SqsPump at the end of your batch processing so that any partially filled batch is sent.

Automatic Batching Strategy

```properties
# Configuration: default batch size
com.limemojito.sqs.batchSize=10
```

The pump accumulates messages until one of the following occurs:

- Batch size reached: when 10 messages (the default) are queued

- Explicit flush: when you call flush(destination)

- Application shutdown: via the @PreDestroy lifecycle hook
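To make the three triggers concrete, here is a minimal single-threaded sketch of size-triggered batching. It is not the library's implementation: the class name BatchingBuffer and the BiConsumer standing in for SqsSender.sendBatch() are hypothetical, and close() plays the role of the @PreDestroy hook.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.function.BiConsumer;

// Hypothetical sketch of size-triggered batching; not SqsPump's actual code.
final class BatchingBuffer<T> implements AutoCloseable {
    private final int batchSize;
    // Stand-in for SqsSender.sendBatch(destination, batch).
    private final BiConsumer<String, List<T>> batchSender;
    // One pending batch per destination queue URL.
    private final Map<String, List<T>> pending = new HashMap<>();

    BatchingBuffer(int batchSize, BiConsumer<String, List<T>> batchSender) {
        this.batchSize = batchSize;
        this.batchSender = batchSender;
    }

    void send(String destination, T message) {
        List<T> batch = pending.computeIfAbsent(destination, d -> new ArrayList<>());
        batch.add(message);
        // Trigger 1: the batch for this destination is full.
        if (batch.size() >= batchSize) {
            flush(destination);
        }
    }

    // Trigger 2: explicit flush of one destination, even if the batch is partial.
    void flush(String destination) {
        List<T> batch = pending.remove(destination);
        if (batch != null && !batch.isEmpty()) {
            batchSender.accept(destination, batch);
        }
    }

    // Trigger 3: shutdown flushes every destination (the @PreDestroy equivalent).
    @Override
    public void close() {
        for (String destination : new ArrayList<>(pending.keySet())) {
            flush(destination);
        }
    }
}
```

Sending 25 messages with a batch size of 10 sends two full batches immediately; the remaining 5 stay in memory until flush() or close() runs, which is why the explicit flush matters.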

Efficiency Calculations

For high-volume scenarios, the efficiency gains are significant (these figures assume the calls are made one after another):

Without Batching (Individual sends):

- 1000 messages = 1000 API calls

- Each call: ~50-100ms latency

- Total time: 50-100 seconds

With SqsPump Batching:

- 1000 messages = 100 batch calls (10 messages each)

- Each batch call: ~50-100ms latency

- Total time: 5-10 seconds

- 90% reduction in execution time
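The arithmetic behind these figures is easy to check in code. This is a minimal sketch under the same assumptions as the text: calls are sequential and each has a fixed latency. The class and method names are illustrative only.

```java
// Illustrative back-of-envelope arithmetic for batched vs. individual sends.
final class BatchMath {
    private BatchMath() {}

    // API calls needed: ceiling(messages / batchSize).
    static long apiCalls(long messages, int batchSize) {
        return (messages + batchSize - 1) / batchSize;
    }

    // Total wall-clock time in milliseconds if the calls run sequentially.
    static long sequentialMillis(long calls, long latencyMillis) {
        return calls * latencyMillis;
    }

    // Fractional reduction in calls compared with one call per message.
    static double reduction(long messages, int batchSize) {
        return 1.0 - (double) apiCalls(messages, batchSize) / apiCalls(messages, 1);
    }
}
```

Note the ceiling division: 1001 messages need 101 batch calls, because the last message travels in a batch of one.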

Cost Optimization

AWS SQS pricing is per request, making batching extremely cost-effective:

- Individual sends: 1000 requests × $0.0000004 = $0.0004

- Batch sends: 100 requests × $0.0000004 = $0.00004

- 90% cost reduction
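The cost figures can be checked with exact decimal arithmetic. This sketch uses the per-request price from the example above (check current AWS pricing for your region and queue type) and applies the SQS billing rule that each 64 KB chunk of a request payload is billed as one request, so the 90% saving holds only while each batch stays within 64 KB. The class name is illustrative.

```java
import java.math.BigDecimal;

// Illustrative SQS request-cost arithmetic; the price is the example figure
// from the text, not a live AWS price.
final class SqsCost {
    static final BigDecimal PRICE_PER_REQUEST = new BigDecimal("0.0000004");
    static final long CHUNK_BYTES = 64 * 1024;

    private SqsCost() {}

    // Each started 64 KB chunk of a request payload counts as one billed request.
    static long billedRequests(long payloadBytes) {
        return Math.max(1, (payloadBytes + CHUNK_BYTES - 1) / CHUNK_BYTES);
    }

    // BigDecimal avoids the rounding noise that double would add to tiny prices.
    static BigDecimal cost(long billedRequests) {
        return PRICE_PER_REQUEST.multiply(BigDecimal.valueOf(billedRequests));
    }
}
```

A batch of ten small messages bills as a single request, while a batch whose combined payload is just over 64 KB bills as two.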

Advanced Usage Patterns

Multi-Queue Processing
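Because SqsPump keeps a pending batch per destination, spreading messages across several queues can increase the number of API calls. This sketch assumes one pending batch per queue URL and counts the batch calls a mixed workload would need; the helper name and queue names are hypothetical.

```java
import java.util.List;
import java.util.Map;
import java.util.function.Function;
import java.util.stream.Collectors;

// Hypothetical helper: estimates batch calls when batches are kept per destination.
final class DestinationBatches {
    private DestinationBatches() {}

    static long batchCalls(List<String> destinations, int batchSize) {
        // Count how many messages go to each destination queue.
        Map<String, Long> perQueue = destinations.stream()
                .collect(Collectors.groupingBy(Function.identity(), Collectors.counting()));
        // Each destination needs ceiling(count / batchSize) calls of its own.
        return perQueue.values().stream()
                .mapToLong(count -> (count + batchSize - 1) / batchSize)
                .sum();
    }
}
```

With a batch size of 10, 28 messages to one queue need 3 calls, but the same 28 split 25/3 across two queues need 4, because each queue's last batch is only partially filled.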

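```java
import java.util.List;
import org.springframework.stereotype.Service;
// SqsPump comes from the sqs-utilities library; Event is your own domain type.

@Service
public class EventPublisher {

    private final SqsPump sqsPump;

    public EventPublisher(SqsPump sqsPump) {
        this.sqsPump = sqsPump;
    }

    public void publishEvents(List<Event> events) {
        for (Event event : events) {
            // determineQueueForEvent is your own routing logic (not shown)
            String queueUrl = determineQueueForEvent(event);
            sqsPump.send(queueUrl, event);
        }
        // Flush all queues at once for maximum efficiency when using multiple destinations.
        // Use flush(queueUrl) for a single destination.
        sqsPump.flushAll();
    }
}
```

FIFO Queue Support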
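In a FIFO queue, SQS accepts but does not deliver a message whose deduplication ID matches one it accepted during the previous five minutes, so reusing one ID for a whole list of messages delivers only the first. The small simulation below shows the effect; it does not talk to SQS, and its class and method names are hypothetical.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical simulation of FIFO deduplication; not an SQS client.
final class FifoDedupSimulator {
    static final long DEDUP_WINDOW_MILLIS = 5 * 60 * 1000L;
    private final Map<String, Long> acceptedAt = new HashMap<>();
    private final List<String> delivered = new ArrayList<>();

    // Returns true if the message would be delivered, false if it is deduplicated.
    boolean send(String deduplicationId, String body, long nowMillis) {
        Long previous = acceptedAt.get(deduplicationId);
        if (previous != null && nowMillis - previous < DEDUP_WINDOW_MILLIS) {
            return false; // same ID inside the window: accepted, never delivered
        }
        acceptedAt.put(deduplicationId, nowMillis);
        delivered.add(body);
        return true;
    }

    List<String> delivered() {
        return List.copyOf(delivered);
    }
}
```

With a shared ID only the first message is delivered; with one ID per message, all of them arrive.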

For ordered message processing:

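```java
import java.util.List;
import java.util.Map;
import org.springframework.stereotype.Service;
// SqsPump comes from the sqs-utilities library.

@Service
public class SequentialProcessor {

    private static final String FIFO_QUEUE = "https://sqs.region.amazonaws.com/account/ordered-queue.fifo";

    private final SqsPump sqsPump;

    public SequentialProcessor(SqsPump sqsPump) {
        this.sqsPump = sqsPump;
    }

    public void sendOrderedMessage(String groupId, List<Object> messages, String deduplicationIdPrefix) {
        for (int i = 0; i < messages.size(); i++) {
            // Give every message its own deduplication ID: SQS accepts but does not deliver
            // FIFO messages that reuse an ID within the 5-minute deduplication interval.
            Map<String, Object> fifoHeaders = Map.of(
                    "message-group-id", groupId,
                    "message-deduplication-id", deduplicationIdPrefix + "-" + i);
            sqsPump.send(FIFO_QUEUE, messages.get(i), fifoHeaders);
        }
        // send any remaining messages
        sqsPump.flush(FIFO_QUEUE);
    }
}
```

Thread Safety and Concurrency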

SqsPump is designed for high-concurrency environments:

Key Thread Safety Features:

- ConcurrentHashMap: thread-safe message storage per destination

- ConcurrentLinkedDeque: lock-free message queuing

- Synchronized flush: only one thread flushes a given destination at a time, with no explicit coding necessary

- Atomic batch operations: batches either complete or fail with rollback
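The following sketch shows how the listed structures can fit together: a ConcurrentHashMap of ConcurrentLinkedDeque buffers, one per destination, plus a per-destination monitor so only one thread drains a destination at a time. It is an illustration under those assumptions, not the library's source; the class name and the BiConsumer sender are hypothetical, and it does not model the rollback behaviour.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ConcurrentLinkedDeque;
import java.util.function.BiConsumer;

// Hypothetical thread-safe batcher; not SqsPump's source code.
final class ConcurrentBatcher<T> {
    private final int batchSize;
    // Stand-in for SqsSender.sendBatch(destination, batch).
    private final BiConsumer<String, List<T>> batchSender;
    // Per-destination message storage.
    private final Map<String, ConcurrentLinkedDeque<T>> queues = new ConcurrentHashMap<>();
    // One monitor object per destination, used to serialize draining.
    private final Map<String, Object> flushLocks = new ConcurrentHashMap<>();

    ConcurrentBatcher(int batchSize, BiConsumer<String, List<T>> batchSender) {
        this.batchSize = batchSize;
        this.batchSender = batchSender;
    }

    void send(String destination, T message) {
        ConcurrentLinkedDeque<T> queue =
                queues.computeIfAbsent(destination, d -> new ConcurrentLinkedDeque<>());
        queue.addLast(message); // lock-free enqueue
        // size() walks the deque (O(n)); acceptable for a sketch.
        if (queue.size() >= batchSize) {
            drain(destination, false);
        }
    }

    void flush(String destination) {
        drain(destination, true);
    }

    // Only one thread drains a given destination at a time, so only it polls the deque.
    private void drain(String destination, boolean includePartial) {
        ConcurrentLinkedDeque<T> queue = queues.get(destination);
        if (queue == null) {
            return;
        }
        synchronized (flushLocks.computeIfAbsent(destination, d -> new Object())) {
            // Send full batches; on an explicit flush, also send the remainder.
            while (queue.size() >= batchSize || (includePartial && !queue.isEmpty())) {
                List<T> batch = new ArrayList<>(batchSize);
                T next;
                while (batch.size() < batchSize && (next = queue.pollFirst()) != null) {
                    batch.add(next);
                }
                batchSender.accept(destination, batch);
            }
        }
    }
}
```

Other threads only ever append, so while one thread holds the destination's lock it can poll without racing another consumer.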

Configuration Options

Application Properties

```yaml
# application.yml
com:
  limemojito:
    sqs:
      batchSize: 10 # Max messages per batch (default: 10, max: 10 per AWS limits)

# AWS SQS Client configuration
aws:
  region: us-west-2
  credentials:
    accessKey: ${AWS_ACCESS_KEY}
    secretKey: ${AWS_SECRET_KEY}
```
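The "max: 10" note reflects the SQS SendMessageBatch limit of 10 entries per request. Spring flattens the YAML above to the key com.limemojito.sqs.batchSize; a hypothetical startup check like the one below (not part of the library's documented API, which may validate or clamp the value differently) shows how that setting could be resolved with its default and range-checked.

```java
import java.util.Properties;

// Hypothetical resolution and validation of the batch size property.
final class BatchSizeSetting {
    static final String KEY = "com.limemojito.sqs.batchSize";
    static final int DEFAULT_BATCH_SIZE = 10;
    // SQS SendMessageBatch accepts at most 10 entries per request.
    static final int SQS_MAX_BATCH_ENTRIES = 10;

    private BatchSizeSetting() {}

    static int resolve(Properties props) {
        String raw = props.getProperty(KEY, String.valueOf(DEFAULT_BATCH_SIZE)).trim();
        int size = Integer.parseInt(raw);
        if (size < 1 || size > SQS_MAX_BATCH_ENTRIES) {
            throw new IllegalArgumentException(
                    KEY + " must be between 1 and " + SQS_MAX_BATCH_ENTRIES + ", got " + size);
        }
        return size;
    }
}
```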

Custom SQS Client Configuration

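```java
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import software.amazon.awssdk.auth.credentials.DefaultCredentialsProvider;
import software.amazon.awssdk.regions.Region;
import software.amazon.awssdk.services.sqs.SqsClient;

@Configuration
public class AwsConfig {

    @Bean
    public SqsClient sqsClient() {
        return SqsClient.builder()
                .region(Region.US_WEST_2)
                .credentialsProvider(DefaultCredentialsProvider.create())
                .build();
    }
}
```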

Message Attributes and Spring Compatibility

SqsPump automatically adds Spring Messaging-compatible attributes:

```json
{
  "MessageAttributes": {
    "id": "uuid-generated",
    "timestamp": "1640995200000",
    "contentType": "application/json",
    "Content-Type": "application/json",
    "Content-Length": "156"
  }
}
```

These attributes ensure seamless integration with:

- Spring Cloud Stream

- Spring Integration

- Spring Boot messaging auto-configuration
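As a rough illustration, the attribute set above could be built like this for a JSON body. This is a hypothetical sketch, not the library's code; in particular, treating Content-Length as the UTF-8 byte length of the body is an assumption.

```java
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.UUID;

// Hypothetical sketch of Spring Messaging-style attributes for a JSON body.
final class MessageAttributesSketch {
    private MessageAttributesSketch() {}

    static Map<String, String> attributesFor(String jsonBody, long epochMillis) {
        Map<String, String> attributes = new LinkedHashMap<>();
        attributes.put("id", UUID.randomUUID().toString());
        attributes.put("timestamp", Long.toString(epochMillis));
        // Both spellings appear in the attribute example above.
        attributes.put("contentType", "application/json");
        attributes.put("Content-Type", "application/json");
        // Assumption: Content-Length is the UTF-8 byte length of the body, not its char count.
        attributes.put("Content-Length",
                Integer.toString(jsonBody.getBytes(StandardCharsets.UTF_8).length));
        return attributes;
    }
}
```

Counting bytes rather than characters matters for non-ASCII payloads: a 16-character body containing "é" is 17 bytes in UTF-8.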

Best Practices

1. Flush when needed

After a send loop, always flush: until you do, messages may still be held in memory waiting for a batch to fill.

```java
messages.forEach(message -> sqsPump.send(queueUrl, message));
// send any remaining messages
sqsPump.flush(queueUrl);
```

2. Use Explicit Flushing for Critical Messages

```java
// For time-sensitive messages
sqsPump.send(queueUrl, criticalMessage);
sqsPump.flush(queueUrl); // Immediate send
```

3. Batch Size Optimization

```properties
# For high throughput: use the maximum batch size
com.limemojito.sqs.batchSize=10

# For low latency: use smaller batches
com.limemojito.sqs.batchSize=3
```

4. Lifecycle Management

SqsPump automatically flushes pending messages on container shutdown via its @PreDestroy hook.

Conclusion

SqsPump transforms SQS messaging in Spring Boot applications by providing:

- 90% reduction in API calls through intelligent batching

- Significant cost savings via reduced request counts

- Thread-safe concurrent message handling

- Spring Boot integration with minimal configuration: a single @Import plus an SqsClient bean

- Automatic lifecycle management preventing message loss

By leveraging SqsSender.sendBatch() under the hood, SqsPump delivers enterprise-grade performance while maintaining the simplicity that Spring Boot developers expect. Whether you're processing thousands of messages per second or need reliable ordered delivery via FIFO queues, SqsPump provides the foundation for scalable, efficient AWS SQS integration.

The combination of automatic batching, thread safety, and Spring Boot's dependency injection makes SqsPump an ideal choice for modern cloud-native applications requiring high-performance message queuing.

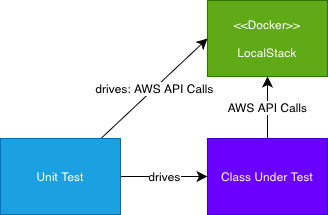

For information on testing SqsPump, see our article here.